AI Isn’t Replacing Your Team. It’s Making You More Key-Person Dependent.

The hidden risk leaders create when they adopt AI without cultural standards

TL;DR

Most companies adopt AI to reduce dependency on people.

Many end up doing the opposite.

When AI becomes personalized, unstandardized, and siloed, the organization becomes more dependent on a handful of individuals who know “how the system works.” That is key-person risk at scale.

AI does not fix misalignment. It amplifies it.

To scale performance with AI, leaders must standardize intent, decisions, and behaviours before they standardize tools.

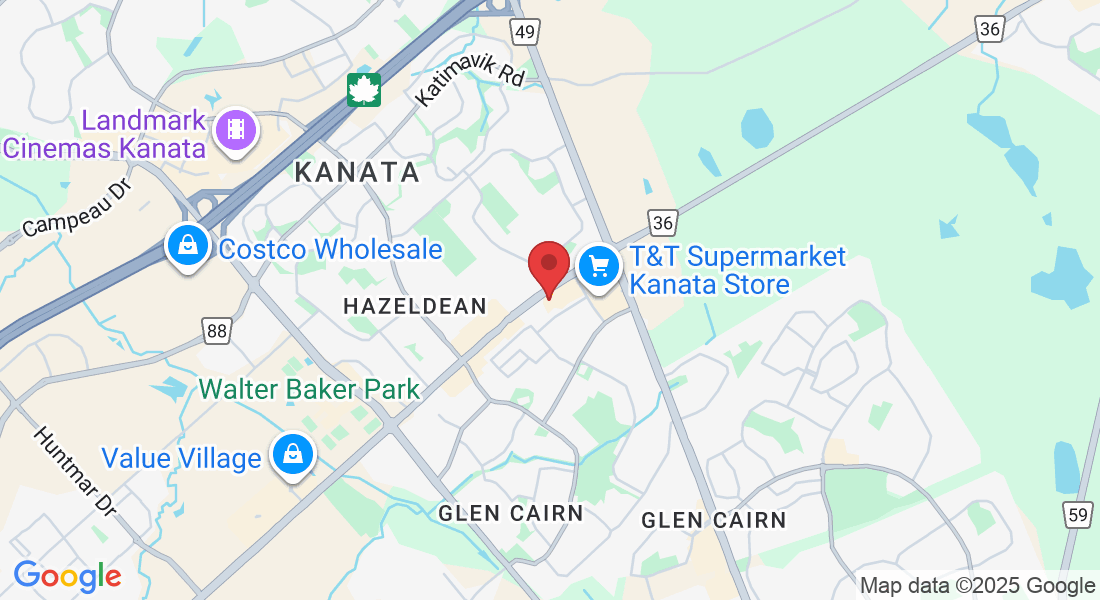

Watch the full conversation: "AI and Leadership: Aligning Technology with Human Insight" (Unstoppable Success Podcast)

The Trap: AI Makes Your Best People Faster, Not Your Organization Better

There is a growing assumption in growth-stage companies that AI will reduce reliance on individual contributors. Automate the workflows, speed up output, reduce headcount risk.

In practice, the opposite often happens.

One person becomes a power user. They build their own prompts, their own GPTs, their own workflow shortcuts. They get results faster than everyone else. Leadership starts routing more work to them.

They become the “AI person.”

Then the “fixer.”

Then the bottleneck.

Then the risk.

AI did not replace key-person dependency.

It accelerated it.

This came up directly in my conversation on the Unstoppable Success podcast because it is one of the most consistent patterns we see in the field.

Key-Person Risk Is Not a Talent Issue. It’s a Standards Issue.

Most leaders treat key-person risk like a hiring problem.

“We need more people like them.”

“We need redundancy.”

“We need cross-training.”

Those are partial solutions.

The real problem is that the organization has not built a repeatable system for how work gets done. AI makes that gap visible.

When AI adoption is unstructured, you get:

different prompts producing different outputs

inconsistent decision-making across teams

fragmented processes that cannot be replicated

hidden knowledge that lives inside one person’s workflow

speed in pockets, confusion everywhere else

This is why organizations feel “busy” but not “better.”

AI Is an Amplifier and a Magnifying Glass

AI is powerful. It is also predictable.

It will scale whatever system you give it.

If your culture is clear, aligned, and operational, AI amplifies performance.

If your culture is vague, inconsistent, and person-dependent, AI amplifies drift.

Leaders often blame the tool when results are inconsistent.

The tool is not the problem.

The organization is not prepared for the tool.

AI does not create alignment.

It requires alignment.

The Real Sequence: People, Process, Then Tools

Many organizations adopt AI in reverse order:

Pick a tool

Let the tool define the process

Hope people adapt

That produces chaos. It also creates key-person dependency because only a few people figure out how to make it work.

The scalable sequence is:

People: clarity of intent, decision standards, behavioural expectations

Process: consistent workflows, communication loops, accountability

Tools: AI and automation layered in to reduce friction

This is why the People–Process–Performance Model™ matters. It is not theory. It is the order of operations for scaling.

The Fix: Standardize the Human Inputs Before You Scale the Machine

AI quality is driven by input quality.

Not just the prompt.

The thinking behind the prompt.

If your people do not share:

what the company stands for

how decisions should be made

what “good” looks like

how to escalate

what standards matter most

then AI outputs will vary wildly because the humans using it vary wildly.

That is why Clear Intent and Cultural Standards exist in the first place. They reduce variability in human judgment so tools can actually amplify consistent performance.

A Practical Way to Reduce AI Key-Person Risk This Week

Here is a simple leadership exercise that prevents AI fragmentation.

Step 1: Identify the “AI Power Users”

Who gets the best results fastest?

Who does everyone depend on?

Who "knows how to do it"?

Step 2: Capture Their Workflow

Not just their prompts. Their decision logic.

What inputs do they collect?

What determines a good output?

What constraints do they consider?

What does their review process look like?

Step 3: Turn It Into a Shared Standard

Create a simple internal standard:

when to use AI

what information must be included

what outputs are acceptable

what requires human review

what must be documented

Step 4: Train the Team on the Standard

AI is not a tool adoption issue.

It is a capability adoption issue.

If you want consistent results, you need consistent thinking.

The Bottom Line

AI can absolutely improve speed and output.

It can also make your organization fragile if you do not install standards first.

If your AI strategy is creating heroes, silos, and dependency, you are not scaling performance. You are scaling risk.

The goal is not to make a few people faster.

The goal is to make the organization more consistent.

AI can scale work.

Only leaders can scale the standards that make work reliable.

Book a Discovery Call

If AI adoption in your organization is creating inconsistency, key-person dependency, or operational confusion, book a discovery call. We will map where alignment breaks down across people, process, and performance, then identify the standards required to make technology an enabler instead of a multiplier of drift.
